Q-FOG SSP and CCT

CYCLIC CORROSION TESTERS

Cyclic corrosion testing provides the best possible laboratory simulation of natural atmospheric corrosion. Research indicates that its results resemble those obtained outdoors, both in the structure and morphology of the corrosion produced and in relative corrosion rates.

Q-FOG SSP model testers from Q-Lab can run traditional salt spray and Prohesion tests. Q-FOG CCT testers can also perform wet/dry cycling with saturating humidity.

Q-FOG chambers are available in two sizes to fulfill a wide range of testing requirements. Q-FOG cyclic corrosion testers are the simplest, most reliable, and easiest-to-use corrosion testers available.

Continuous and Cyclic Corrosion Testing

Conventional salt spray testing exposes specimens to a continuous salt spray at 35 °C.

In cyclic corrosion testing, specimens are exposed to a series of different environments in a repetitive cycle that mimics the outdoors. Simple cycles, such as Prohesion, may consist of cycling between salt fog and dry conditions. More sophisticated automotive methods may call for multi-step cycles that incorporate humidity or condensation, along with salt spray and dry-off. See the Q-FOG brochure for more information.

Within one Q-FOG chamber, it is possible to cycle through a series of the most significant corrosion environments. Even complex test cycles can easily be programmed with the Q-FOG controller.

Models and Configurations

Q-FOG corrosion test chambers are available in three types: SSP, CCT, and CRH. Q-FOG SSP and CCT testers can perform tests that do not require relative humidity control. Model SSP performs traditional salt spray and Prohesion tests. Model CCT performs salt spray, Prohesion, and 100% humidity exposures, which together meet the requirements of many cyclic automotive tests.

- Q-FOG SSP-600: conventional salt spray and Prohesion tests, approximately 600-liter chamber volume

- Q-FOG SSP-1100: conventional salt spray and Prohesion tests, approximately 1100-liter chamber volume

- Q-FOG CCT-600: conventional salt spray, Prohesion, and 100% humidity, approximately 600-liter chamber volume

- Q-FOG CCT-1100: conventional salt spray, Prohesion, and 100% humidity, approximately 1100-liter chamber volume

The Q-FOG CRH tester features enhanced test control, including relative humidity control, and is covered on a separate page. See Q-FOG Specifications for comparative information.

Model SSP for Conventional Salt Spray or Prohesion Tests

The Q-FOG SSP corrosion tester can perform numerous accelerated corrosion tests, including continuous salt spray (ASTM B117 and ISO 9227) and Prohesion (ASTM G85 Annex 5). Visit our standards search for more information on Q-FOG SSP capability.

Continuous salt spray exposures are widely specified for testing components and coatings for corrosion resistance. Applications include plated and painted finishes, aerospace and military components, and electrical/electronic systems.

The Prohesion test uses fast cycling with rapid temperature changes, a low-humidity dry-off step, and a different corrosive solution (a dilute mixture of sodium chloride and ammonium sulfate) to provide a more realistic test than continuous salt spray. Many researchers have found this test useful for industrial maintenance coatings.

Most continuous salt spray tests are performed to widely used specifications for basic corrosion testing, such as ASTM B117 and ISO 9227. They are typically run at an elevated temperature, do not incorporate a dry-off cycle, and require heated, humidified air for fog delivery.

Model CCT for Corrosion Research and Cyclic Automotive Tests

The Q-FOG CCT tester has all the advantages of Model SSP but adds the flexibility of a 100% humidity step. Visit our standards search for more information on Q-FOG CCT capability.

Automotive corrosion test methods typically call for exposing specimens to a repetitive cycle of salt spray, high humidity, low humidity dry-off, and ambient conditions. These test methods were originally developed as labor-intensive manual procedures. The multi-functional Q-FOG CCT corrosion tester is designed to perform these cyclic tests automatically in a single chamber. Additionally, the CCT model is able to run Copper-Accelerated Acetic-Acid Salt Spray (CASS) tests such as ASTM B368 or ISO 9227 CASS.

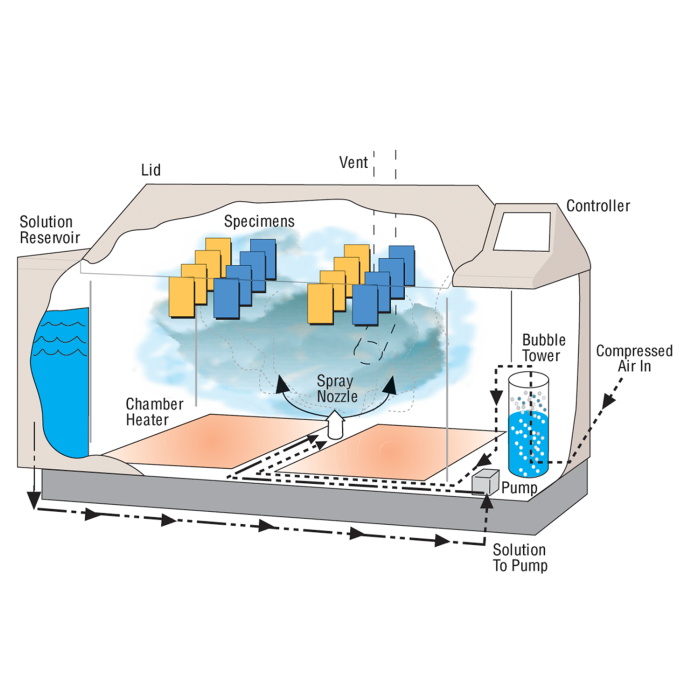

Precise Control of Fog Dispersion

The Q-FOG cyclic corrosion chamber controls fog dispersion more precisely than conventional systems, which cannot vary spray volume and throw distance independently. A variable-speed peristaltic pump sets the amount of corrosive solution delivered to the spray atomizer, while the air pressure regulator sets the distance of the “throw.” Note that purified water is required for proper operation of Q-FOG corrosion testers. See The Importance of Water Purity for more information.

Solution Reservoir

Space utilization is maximized and maintenance is minimized with the Q-FOG tester's internal solution reservoir. The 120-liter reservoir holds enough solution to run most tests for 7 days or more. The reservoir has an integral salt filter and a built-in alarm that alerts the operator when the solution is low. The integrated reservoir can optionally be connected to an external tank.
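As a rough check on the reservoir claim, the sketch below works out what average solution draw a full 120 L reservoir can sustain for 7 days: 120 L ÷ (7 × 24 h) ≈ 0.71 L/h. The function names are hypothetical, and any consumption rate passed in is an assumption, since the actual draw depends on how much of the cycle is spent in fog and on the pump setting.

```python
# Sketch: relate reservoir capacity, run length, and average solution draw.
RESERVOIR_L = 120.0  # reservoir capacity stated for the Q-FOG tester


def max_average_consumption_l_per_h(capacity_l: float, days: float) -> float:
    """Highest average draw (L/h) a full reservoir can sustain for `days`."""
    if capacity_l <= 0 or days <= 0:
        raise ValueError("capacity and days must be positive")
    return capacity_l / (days * 24.0)


def run_time_days(capacity_l: float, consumption_l_per_h: float) -> float:
    """Days a full reservoir lasts at a constant (hypothetical) average draw."""
    if consumption_l_per_h <= 0:
        raise ValueError("consumption must be positive")
    return capacity_l / consumption_l_per_h / 24.0


if __name__ == "__main__":
    rate = max_average_consumption_l_per_h(RESERVOIR_L, 7)
    print(f"{rate:.3f} L/h")  # 120 / 168 h = 0.714 L/h
    print(f"{run_time_days(RESERVOIR_L, 0.5):.1f} days at an assumed 0.5 L/h")  # 10.0 days
```

In a cyclic test, solution is drawn only during fog steps, so the average over a full cycle is lower than the draw while fogging.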

Fast Cycling

Q-FOG testers can change temperatures exceptionally fast because of their unique internal chamber heater and their high-volume cooling/dry-off blower. An additional air heater allows very low-humidity dry-off exposures. Conventional chambers with water jackets cannot cycle rapidly because of the thermal mass of the water, nor can they produce low humidity.

Easy Programming and Specimen Mounting

Q-FOG SSP and CCT test chambers are designed to cycle between four conditions: Fog, Dry-Off, 100% Humidity (Model CCT only), and Dwell. Test conditions, time, and temperature are controlled by a built-in microprocessor. A remarkably simple user interface featuring dual, full-color touchscreens allows easy programming and operation in 17 user-selectable languages (English, French, Spanish, Italian, German, Chinese, Korean, Japanese, Czech, Dutch, Polish, Portuguese, Russian, Swedish, Thai, Turkish, and Vietnamese). The operator can quickly create new cycles or run any of the pre-programmed cycles. The Q-FOG controller includes complete self-diagnostics, including warning messages, routine service reminders, and safety shutdown.
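To make the four-condition model concrete, here is a minimal sketch of how a repeating cycle could be described and checked before a run. Only the condition names come from the text; the `Step` type, `validate_cycle`, the model strings, and the setpoints are hypothetical illustrations, not the Q-FOG controller's actual program format.

```python
from dataclasses import dataclass
from enum import Enum


class Condition(Enum):
    """The four step types named for Q-FOG SSP and CCT cycles."""
    FOG = "Fog"
    DRY_OFF = "Dry-Off"
    HUMIDITY = "100% Humidity"  # available on Model CCT only
    DWELL = "Dwell"


@dataclass(frozen=True)
class Step:
    condition: Condition
    hours: float   # step duration
    temp_c: float  # chamber temperature setpoint (illustrative)


def validate_cycle(steps: list[Step], model: str) -> None:
    """Raise ValueError if the cycle is empty, has a non-positive step,
    or uses 100% Humidity on a model other than CCT (hypothetical model strings)."""
    if not steps:
        raise ValueError("a cycle needs at least one step")
    for step in steps:
        if step.hours <= 0:
            raise ValueError(f"{step.condition.value} step must last longer than 0 h")
        if step.condition is Condition.HUMIDITY and not model.upper().startswith("CCT"):
            raise ValueError(f"{model} cannot run a 100% Humidity step")


def cycle_hours(steps: list[Step]) -> float:
    """Length of one repetition of the cycle."""
    return sum(step.hours for step in steps)


def repetitions(steps: list[Step], test_hours: float) -> int:
    """Whole cycles that fit in a test lasting `test_hours`."""
    return int(test_hours // cycle_hours(steps))


if __name__ == "__main__":
    # Illustrative fog/dry-off cycle; setpoints are placeholders, not taken from any standard.
    fog_dry = [Step(Condition.FOG, 1.0, 25.0), Step(Condition.DRY_OFF, 1.0, 35.0)]
    validate_cycle(fog_dry, "SSP-600")
    print(cycle_hours(fog_dry), repetitions(fog_dry, 1000))  # 2.0 500
```

Adding a `Condition.HUMIDITY` step to the same list and validating it against `"SSP-1100"` raises `ValueError`, mirroring the rule that 100% humidity is a Model CCT feature.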

An external USB port on every Q-FOG tester lets users quickly install software upgrades that address key performance issues. For quality systems that require documented proof of test conditions, the USB port can also be used to download tester performance history with Q-Lab's VIRTUAL STRIPCHART application. Data exported over USB can be emailed directly to Q-Lab's technical support desk for expert troubleshooting and diagnostics.

Q-FOG testers are equipped with a viewing window in the side of the lid and an internal light to allow easy monitoring of the test conditions. Q-FOG CRH testers have a low belt line and an easy-opening lid for easy specimen mounting.

Affordable

Q-FOG cyclic corrosion testers offer state-of-the-art corrosion testing technology, reliability, ease of operation, and easy maintenance, all at a remarkably affordable price.

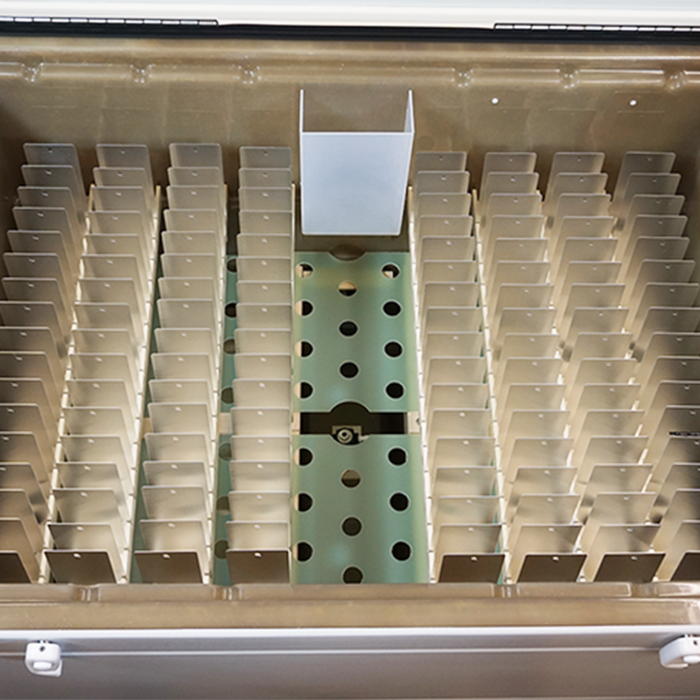

Specimen Holders

Optional test panel holders are available that allow the user to mount specimens at a 15°

Oddly shaped parts, such as large three-dimensional specimens, can be mounted on special 20 mm hanging rods.

For extremely large or heavy three-dimensional objects (such as metal wheel rims, engine parts, etc.), a rack-level or diffusion-level grate kit may be used. The Q-FOG tester’s sturdy construction can support a well-distributed total load of up to 544 kg (1200 lb.), ensuring compatibility with even the heaviest of automotive and other components.

Download our Q-FOG Specimen Mounting bulletin for more information on panel racks, rods, and grates.

Chamber Access Port

A test chamber access port is available for connecting specimens in the test chamber to external devices. Cables, wires, or hoses can be inserted through a hole in the front of the tester. This option is often used to keep electrical devices powered on during testing.

Other Accessories

Q-Lab offers a fog collection kit for the Q-FOG tester, consisting of six graduated cylinders, O-rings, and collection funnels. Some test methods require fog collection measurements. An additional kit allows these collections to be made outside the chamber, so testing does not have to be interrupted for fog measurement.
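Fog collection readings are usually judged against a rate normalized to collecting area. ASTM B117, for example, calls for 1.0 to 2.0 mL of solution per hour for each 80 cm² of horizontal collecting area, averaged over a run of at least 16 h. The sketch below applies that check to the six cylinders. The function names, funnel area, and readings are hypothetical, and other methods may set different limits.

```python
# Reference collecting area used by ASTM B117 when stating the fog collection rate.
REFERENCE_AREA_CM2 = 80.0


def collection_rate(volume_ml: float, hours: float, funnel_area_cm2: float) -> float:
    """Collected fog in mL per hour per 80 cm² of horizontal collecting area."""
    if hours <= 0 or funnel_area_cm2 <= 0:
        raise ValueError("hours and funnel area must be positive")
    return volume_ml / hours * (REFERENCE_AREA_CM2 / funnel_area_cm2)


def check_collectors(readings_ml: list[float], hours: float, funnel_area_cm2: float,
                     low: float = 1.0, high: float = 2.0,
                     min_hours: float = 16.0) -> list[bool]:
    """Return True for each collector whose normalized rate lies in [low, high].

    Defaults follow the ASTM B117 range (1.0 to 2.0 mL/h averaged over at
    least 16 h); pass other limits for methods that specify them."""
    if hours < min_hours:
        raise ValueError(f"average over at least {min_hours} h")
    return [low <= collection_rate(v, hours, funnel_area_cm2) <= high
            for v in readings_ml]


if __name__ == "__main__":
    # Hypothetical readings from six cylinders after a 16 h run with 80 cm² funnels.
    print(check_collectors([24, 20, 30, 35, 18, 26], 16, 80.0))
    # [True, True, True, False, True, True]
```

Normalizing by funnel area lets the same check work with funnels that are not exactly 80 cm²; a 10 cm diameter funnel, for instance, has about 78.5 cm² of area.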

Also available is a one-year maintenance parts kit, which consists of eight pump tubes, one air filter, two solution filter elements, and a handy hex wrench.

A convenient salt kit is available containing a pre-measured, certified quantity of NaCl (530 g) that is compatible with ASTM B117 requirements. Just add the recommended amount of water to obtain a 5% solution.
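The water needed follows from the definition of a mass-percent solution (ASTM B117 specifies 5 parts by mass of NaCl to 95 parts of water): water = 530 g × 95 / 5 = 10 070 g, or roughly 10.07 L if the water's density is taken as about 1 g/mL. This sketch only shows the arithmetic; the kit's own instructions remain the reference, and the function names are hypothetical.

```python
def water_for_solution(salt_g: float, mass_pct: float) -> float:
    """Grams of water that turn `salt_g` of NaCl into a `mass_pct` % (by mass) solution."""
    if salt_g <= 0 or not 0 < mass_pct < 100:
        raise ValueError("salt mass must be positive and 0 < mass_pct < 100")
    return salt_g * (100.0 - mass_pct) / mass_pct


def mass_percent(salt_g: float, water_g: float) -> float:
    """Concentration of the finished solution, % NaCl by mass."""
    return 100.0 * salt_g / (salt_g + water_g)


if __name__ == "__main__":
    water_g = water_for_solution(530, 5)
    # Assumes a water density of about 1 g/mL to convert grams to liters.
    print(f"{water_g:.0f} g water (about {water_g / 1000:.2f} L)")  # 10070 g water (about 10.07 L)
    print(mass_percent(530, water_g))  # 5.0
```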

Mass-loss coupons

Corrosion (or "mass-loss") coupons ensure repeatability and reproducibility when performing laboratory corrosion testing. They help a user independently monitor the test conditions in the chamber, by measuring the mass loss of the coupons as the test progresses. CX corrosion coupons are designed to meet the stringent requirements specified in modern corrosion test methods, such as GMW14872, GM9540P, SAE J2334, SAE J2721, ASTM B117, ISO 9227, and VDA-233-102.
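A coupon's mass loss is commonly converted to an average uniform corrosion rate with the relation given in ASTM G1, CR = K·W / (A·T·D), where K = 8.76 × 10⁴ gives mm/y when W is the mass loss in g, A the exposed area in cm², T the exposure time in h, and D the density in g/cm³. The sketch below implements that formula. The coupon weights, area, and steel density (7.86 g/cm³) are assumptions for illustration; use the actual coupon material and measured area.

```python
# ASTM G1 constant giving mm/y when W is in g, A in cm², T in h, D in g/cm³.
K_MM_PER_YEAR = 8.76e4


def corrosion_rate_mm_per_year(mass_loss_g: float, area_cm2: float,
                               hours: float, density_g_cm3: float) -> float:
    """Average uniform corrosion rate: CR = K * W / (A * T * D)."""
    if min(area_cm2, hours, density_g_cm3) <= 0:
        raise ValueError("area, hours, and density must be positive")
    return K_MM_PER_YEAR * mass_loss_g / (area_cm2 * hours * density_g_cm3)


def thickness_loss_um(mass_loss_g: float, area_cm2: float, density_g_cm3: float) -> float:
    """Equivalent uniform thickness loss in micrometers (1 cm = 10 000 um)."""
    if area_cm2 <= 0 or density_g_cm3 <= 0:
        raise ValueError("area and density must be positive")
    return mass_loss_g / (area_cm2 * density_g_cm3) * 1e4


if __name__ == "__main__":
    STEEL_DENSITY = 7.86  # assumed carbon-steel density, g/cm³
    # Hypothetical coupon weighed before and after a 720 h test.
    before_g, after_g = 40.000, 39.500
    loss = before_g - after_g
    print(f"{corrosion_rate_mm_per_year(loss, 30.0, 720, STEEL_DENSITY):.3f} mm/y")
    print(f"{thickness_loss_um(loss, 30.0, STEEL_DENSITY):.1f} um")
```

Comparing such results across runs, or against the range a test method specifies, is what lets coupons act as an independent check on chamber conditions.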

All Q-PANEL CX-series corrosion coupons include a Certificate of Analysis and come pre-cleaned and ready to use right out of the package. The user can simply measure the panels and place them in the tester, saving time and effort. And best of all, CX corrosion coupons are often half the price of competitors’ coupons. Q-Lab mass-loss coupons are offered in three varieties:

- CXB-12-K 1 × 2 in (25 × 51 mm) - meets requirements of GMW14872, GM9540P, SAE J2334, SAE J2721

- CXC-35-K 3 × 5 in (76 × 127 mm) - meets requirements of ASTM B117

- CXD-2.76-5.90-K 2.76 × 5.90 in (70 × 150 mm) - meets requirements of ISO 9227, VDA-233-102, GB/T 10125
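For labs that track many test methods, the list above can be kept as a small lookup table. This is a hypothetical sketch: the part numbers, sizes, and standards are copied from the list, while the `COUPONS` structure and `coupons_for` helper are illustrative names, not a Q-Lab tool.

```python
# Coupon-to-standard mapping taken from the list above (sizes in mm).
COUPONS = {
    "CXB-12-K": {"size_mm": (25, 51),
                 "standards": ("GMW14872", "GM9540P", "SAE J2334", "SAE J2721")},
    "CXC-35-K": {"size_mm": (76, 127), "standards": ("ASTM B117",)},
    "CXD-2.76-5.90-K": {"size_mm": (70, 150),
                        "standards": ("ISO 9227", "VDA-233-102", "GB/T 10125")},
}


def coupons_for(standard: str) -> list[str]:
    """Part numbers whose listed standards include `standard` (case-insensitive)."""
    wanted = " ".join(standard.upper().split())  # normalize case and spacing
    return [part for part, spec in COUPONS.items()
            if wanted in (s.upper() for s in spec["standards"])]


if __name__ == "__main__":
    print(coupons_for("ASTM B117"))  # ['CXC-35-K']
    print(coupons_for("iso 9227"))   # ['CXD-2.76-5.90-K']
```

An empty list means the standard is not among those listed here, not that no suitable coupon exists.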

Visit our Corrosion Coupons page or download the Corrosion Coupons Specification Bulletin for more information.

Looking for additional information on the Q-FOG CRH? Browse our extensive document library for technical articles, technical bulletins, and more.

Resources

Document Library

Browse Q-Lab’s extensive library of corrosion testing literature and technical content.

Education

Check out blogs, case studies, articles, and webinars to build your knowledge of corrosion testing.

Standards

Review setup and performance information on key international and OEM test standards from ASTM, ISO, SAE, JIS, GB, and more.